When Three Sources Are Not Enough: Teaching Students What ‘Independent’ Really Means

This publication is written by Tamara N. Lewis Arredondo, Instructional Designer at The Hague Center for Teaching and Learning and researcher with the Multilevel Regulation research group at The Hague University of Applied Sciences, as part of the Ulysseus project METACOG.

Imagine that your student is writing a paper on the health effects of a food additive that has recently attracted public attention. She uses a generative AI tool to get an overview of the research. The AI provides a confident summary: the additive has been linked to digestive issues in multiple studies, and several consumer advocacy groups have called for its removal from the market.

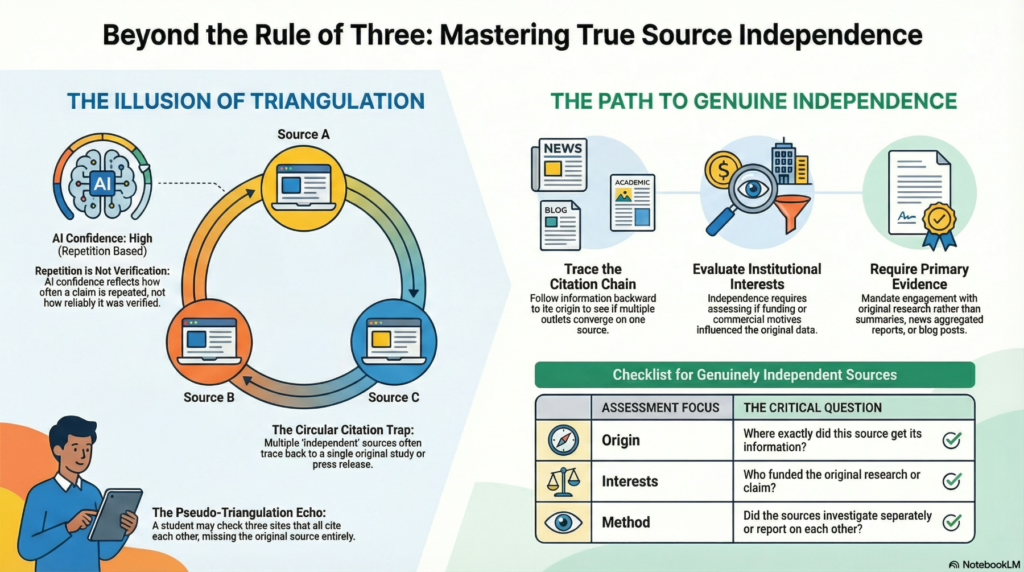

The student, having learned about triangulation, meticulously checks three sources. A popular science website confirms the claim. A well-known newspaper article says much the same. A health blog repeats the information with similar wording. Satisfied, she moves on.

There is just one problem: all three sources trace back to a single industry-funded study. The newspaper cited the popular science site. The blog cited the newspaper. None consulted primary research or regulatory assessments. The student triangulated—and still got it wrong.

Triangulation Is Necessary—But Not Always Sufficient

In a previous article for METACOG, I argued that triangulation—verifying AI-generated information through at least two independent, authoritative sources—should become a default habit for students. The case of Keus.nl, an AI-generated voting aid that produced significant errors during the 2026 Dutch municipal elections, illustrated why this matters. Students must learn that AI does not know; it predicts, synthesizes, and infers. Triangulation provides a check against confident but fabricated outputs.

But teaching triangulation is only the beginning. As the opening scenario illustrates, the concept of “independent” sources is far more complex than it first appears. Students can follow the rule—find multiple sources—while still falling into an epistemic trap.

The Illusion of Multiple Sources

In a networked information environment, sources frequently cite each other or rely on the same press release. What looks like independent confirmation is often circular. Three websites may present identical claims not because the claims have been independently verified, but because each site copied from the previous one—or because all three drew from a single original that none of them scrutinized.

This problem is amplified by generative AI. These tools are trained on a vast body of interconnected texts. When an AI tool outputs a claim, it may be synthesizing dozens of articles that all derive from one flawed study, one misinterpreted statistic, or one unverified assertion that spread virally. The AI’s confidence reflects the frequency of repetition in its training data, not the reliability of the underlying evidence. A claim repeated a thousand times is not a claim verified a thousand times.

For students, this creates a hidden danger. The AI has already performed a kind of pseudo-triangulation—synthesizing information from multiple sources—but that synthesis is invisible and unverifiable. Students who then check additional sources may simply be extending the same chain of circular citation.

What Independence Actually Requires

Two sources are genuinely independent when they arrive at the same conclusion through separate means: different data, different methods, different institutional interests. Independence is about the structure of evidence.

To assess whether sources are truly independent, teach your students to ask four questions. First, where did this source get its information? Tracing the citation chain backward often reveals that multiple sources converge on a single origin. Second, who funded or produced the original research or claim? A study funded by an industry group and a study funded by an independent health agency may reach similar conclusions, but they carry different epistemic weight. Third, does this source have a reason to confirm or deny the claim regardless of the evidence? Institutional interests, commercial pressures, and ideological commitments can shape how information is presented. Fourth, did the sources investigate separately, or are they reporting on each other? A newspaper article citing a blog that cited the same newspaper is not triangulation—it is an echo.

Returning to the opening scenario, imagine your student traced the health claim back to its origin. She would have discovered that the popular science site relied on a press release, the newspaper summarized the popular science site, and the blog paraphrased the newspaper. None conducted original reporting. The single study at the root of the chain was funded by a competitor of the additive’s manufacturer, a relevant detail that none of the secondary sources mentioned. This is what assessing source independence looks like in practice: not counting links, but mapping relationships.
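For lecturers who want to make this tracing exercise concrete, the citation chain can be written down as a small map and followed backward. The Python sketch below is only an illustration: the `cites` map is a hypothetical encoding of the scenario above (including the assumption that the press release reported on the study), and the `origins` function is an invented helper, not part of any METACOG tool.

```python
# Hypothetical citation map for the opening scenario: each source points to
# the sources it relied on. An empty list marks an origin (nothing further cited).
cites = {
    "health blog": ["newspaper"],
    "newspaper": ["popular science site"],
    "popular science site": ["press release"],
    "press release": ["industry-funded study"],  # assumed link, see lead-in
    "industry-funded study": [],
}


def origins(source, cites, seen=None):
    """Follow citations backward and return the set of origins reached."""
    seen = set() if seen is None else seen
    if source in seen:
        # Already visited on this trace: a circular citation adds no new origin.
        return set()
    seen.add(source)
    cited = cites.get(source, [])
    if not cited:
        return {source}
    found = set()
    for earlier in cited:
        found |= origins(earlier, cites, seen)
    return found


# The three sources the student checked.
checked = ["popular science site", "newspaper", "health blog"]
roots = set()
for source in checked:
    roots |= origins(source, cites)

print(f"{len(checked)} sources checked, {len(roots)} origin(s): {sorted(roots)}")
```

In this sketch the three checked sources collapse to a single origin, and two sources that cite only each other yield no origin at all. The count of origins, not the count of links, is what the student would need to report; who funded each origin still has to be judged separately.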

Teaching Source Independence in Practice

For lecturers, the challenge is translating this concept into teachable skills. Before the proliferation of AI-generated content, teaching source evaluation typically focused on assessing individual sources: Is this a credible publication? Is the author qualified? Is the information current? These questions remain important, but they are no longer sufficient. The shift toward AI-mediated research requires a relational approach—one that examines how sources connect to one another and whether they constitute genuinely independent lines of evidence.

Several strategies can help build this capacity. Citation chain assignments ask students to take a specific claim and trace it backward through sources until they reach the origin. This exercise makes the dependency structure visible. Students often discover that what appeared to be broad consensus is actually a single source refracted through multiple outlets. Source comparison exercises present students with two or three sources that appear independent and ask them to determine whether they actually are. This develops the critical reading skills necessary to detect circular citation. Primary source requirements mandate that for certain assignments, at least one source must be original research, an official document, or a firsthand account—not a news summary or aggregated report. This forces engagement with evidence rather than interpretation.

Finally, discussing institutional interests openly helps students understand that independence is not just about method but about motive. Two pharmaceutical studies may use identical protocols, but if one is funded by the drug’s manufacturer and the other by a regulatory agency, they are not epistemically equivalent. Teaching students to notice and weigh these differences is essential.

These strategies take more time than surface-level verification. But they build durable skills that transfer across contexts—academic, professional, and civic.

Why This Matters for AI-Generated Content

The urgency of teaching source independence is heightened by the nature of generative AI. Because these systems synthesize from enormous datasets, they can amplify the echo chamber effect. A claim that has been repeated across hundreds of websites—regardless of its accuracy—becomes a confident AI output. The model has no way to distinguish between repetition and verification.

Students using AI can be more vulnerable to circular sourcing than students conducting traditional research. The AI has already aggregated the information; the student may then unknowingly extend that same chain. Without explicit training in source independence, triangulation becomes a ritual rather than a safeguard.

This is why teaching source independence is not simply good research practice. It is essential AI literacy.

Conclusion: From Counting Sources to Mapping Evidence

Triangulation remains a powerful strategy, but only when students understand that counting sources is not the same as verifying independence. The goal is not three links. It is three separate lines of evidence.

For lecturers, this means moving beyond “check your sources” toward teaching students how sources relate to one another. In an information environment shaped by aggregation, repetition, and AI synthesis, the ability to trace claims to their origins and assess whether evidence is genuinely independent has become foundational.

The next time your student says they verified an AI-generated claim with three sources, ask them: Were those sources truly independent? The answer may reveal whether they have mastered only the ritual of triangulation or its substance.

About METACOG

METACOG is an EU-funded AI literacy programme designed to combat disinformation and fake news by promoting civic engagement and shared European values.

The initiative aims to strengthen citizens’ ability—particularly among higher education students and teachers—to recognize and respond to disinformation through an innovative curriculum, AI-based tools, and best practices.

By acknowledging the dual role of artificial intelligence in both generating and countering disinformation, METACOG seeks to empower individuals with the critical thinking and digital literacy skills essential for navigating today’s information landscape.

Targeting higher education institutions, government and non-governmental organizations, media professionals, and the general public, METACOG is led by Haaga-Helia University of Applied Sciences (Finland) in collaboration with the Technical University of Košice (Slovakia), the University of Montenegro (Montenegro), and The Hague University of Applied Sciences (The Netherlands). The project is funded under the Erasmus+ Programme (KA220-HED) and will run from 1 September 2024 to 31 August 2027.