Triangulation: A Simple Strategy to Help Students Detect AI-Generated Misinformation

This publication was written by Tamara N. Lewis Arredondo, Instructional Designer at The Hague Center for Teaching and Learning, as part of the Ulysseus project METACOG.

In February 2026, an AI-generated voting aid called Keus.nl made national headlines in the Netherlands. Built by a single IT professional in just a few days, the tool was used nearly 70,000 times in one week. It promised voters quick, personalized guidance for local elections across 342 municipalities. It also produced significant errors. Parties were listed as active when they had disbanded. Municipalities were confused with one another. Political positions were algorithmically inferred in ways that did not reflect reality.

The creator ultimately pulled the plug. He acknowledged that the system hallucinated—his word—failed to follow instructions, and introduced unpredictable gaps in the data even after multiple refinements. Political parties considered legal action. Local representatives warned that democracy was being undermined.

This story became personal when I realized that Keus.nl had also been used in my own city’s elections. Students, colleagues, neighbors—perhaps even some of the lecturers I work with—may have accessed inaccurate information about political parties. The possibility that voters in my own democratic community were exposed to algorithmically generated misinformation prompted me to write this reflection and share a concrete pedagogical strategy that I believe every lecturer should consider adopting.

The Problem: AI and the Illusion of Reliability

The Keus.nl case illustrates a crucial misconception: that AI-generated outputs are neutral, comprehensive, and reliably synthesized from available data. The voting aid was based on public municipal voting records from 2022 to 2026. It algorithmically derived party positions. On paper, this sounds systematic and objective.

In practice, the system confused municipalities such as Rhenen and Rheden, listed inactive parties as participating, misidentified political orientations (labeling the VVD as a religious party, for instance), failed to account for changes in party positions, and generated plausible but incorrect associations. This is the hallmark of AI hallucination: confident, fluent outputs that are partially fabricated or incorrectly inferred.

The most concerning element is not that mistakes occurred. It is that they appeared credible. A polished interface, large-scale usage numbers, and the presence of a disclaimer created an aura of legitimacy. The warning that the tool might contain errors was placed in small print. Meanwhile, the interface itself projected authority. This is precisely the kind of epistemic trap we must train students to recognize.

Why This Matters for Education

As an Instructional Designer at The Hague Center for Teaching and Learning, I work with lecturers across disciplines who are grappling with how to integrate AI into their teaching responsibly. Students are already using generative AI tools—for summarizing texts, generating ideas, drafting essays, and researching topics. If educators fail to address the misinformation potential of these systems, we tacitly endorse naive trust.

The Keus.nl case demonstrates several critical lessons. AI can produce errors even when it is built on public, verifiable data. Strict instructions to the model do not eliminate hallucinations: the creator explicitly noted that his system failed to follow instructions despite repeated refinements. Disclaimers do not meaningfully protect users from misinformation. And scale amplifies impact before flaws are detected.

Students must understand that AI does not know. It predicts. It synthesizes. It approximates. It infers patterns. When those inferences extend beyond verifiable data, misinformation emerges. Educators therefore carry a responsibility to integrate AI literacy into their teaching—not as a technical skill alone, but as a critical reasoning skill.

The Strategy: Teach Triangulation as a Default Habit

If there is one powerful and practical strategy I recommend to lecturers, it is this: normalize triangulation as a default behavior.

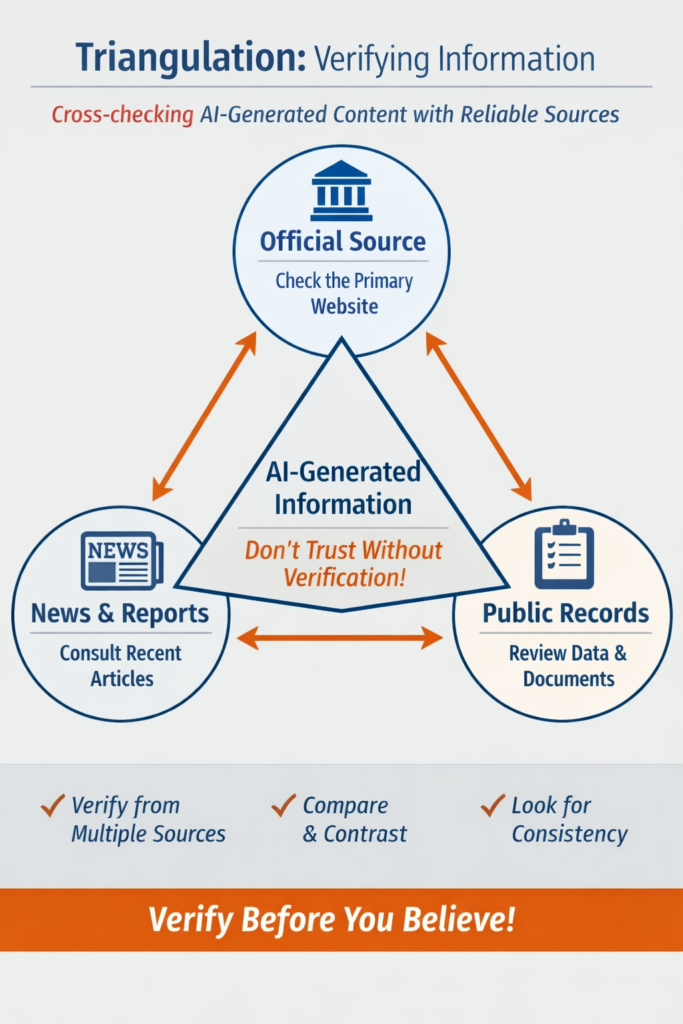

Triangulation means verifying AI-generated information through at least two independent, authoritative sources before treating it as reliable. The principle is simple, but the pedagogical implementation requires intentionality.

Here are concrete ways to embed triangulation into your teaching practice:

- Require source documentation. When students use AI to research a topic, ask them to list the sources they used to verify the information. This makes verification visible and accountable.

- Integrate verification into assessment rubrics. Design assignments where part of the grading explicitly assesses verification steps, not just final answers. Students quickly learn that the process matters as much as the product.

- Model the process live. Generate an AI answer in class and then collectively fact-check it. Walk students through how you identify claims, select appropriate sources, and evaluate whether the information holds up. This demonstration normalizes skepticism without demonizing the technology.

For example, if AI provides a summary of a political party’s stance, students should be trained to visit the party’s official website, consult recent news coverage, and check municipal voting records directly. By embedding triangulation into assessment design, educators make verification habitual. Over time, students internalize the practice.
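For courses where students log their verification steps in a structured form, the "at least two independent sources" rule can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the `Claim` class, the `is_triangulated` function, and the `.example` URLs are hypothetical, and the sketch treats two sources as independent only when they sit on different hosts. Whether a source is authoritative remains a human judgment that the code does not attempt to make.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Claim:
    """An AI-generated statement plus the sources a student checked it against."""
    text: str
    # URLs of sources the student found that confirm the claim (hypothetical log format)
    sources: list = field(default_factory=list)


def independent_hosts(urls):
    """Return the distinct hosts behind a list of URLs.

    Assumption: two pages on the same host are not independent of each other.
    This is a simplification; different hosts can still share one origin.
    """
    hosts = set()
    for url in urls:
        host = urlparse(url).hostname
        if not host:
            continue  # skip entries that are not well-formed URLs
        host = host.lower()
        if host.startswith("www."):
            host = host[4:]
        hosts.add(host)
    return hosts


def is_triangulated(claim, minimum=2):
    """True if the claim is backed by at least `minimum` independent hosts."""
    return len(independent_hosts(claim.sources)) >= minimum


# Usage with placeholder data (the party and the URLs are invented)
claim = Claim(
    text="Party X voted in favour of the housing plan.",
    sources=[
        "https://party-x.example/standpunten",
        "https://news.example/housing-vote",
    ],
)
print(is_triangulated(claim))  # True: two different hosts
```

A check like this only confirms that verification was documented. It says nothing about whether the sources actually support the claim, which is why the live fact-checking described above still matters.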

This shifts AI from being an authority to being a starting point.

From Digital Skills to Epistemic Awareness

The Keus.nl case highlights a broader issue: technological fluency does not equal epistemic literacy. A tool can be well-coded, widely used, and still epistemically unreliable. As John Bijl of the Perikles Institute observed, voting advice tools are powerful instruments that must be handled with care.

The creator of Keus.nl himself drew a connection to the Dutch childcare benefits scandal (toeslagenaffaire), which demonstrated the dangers of uncritical reliance on algorithmic systems. In both cases, the lesson is the same: algorithms are not self-correcting moral agents. They require oversight, transparency, and critical scrutiny.

As lecturers—especially in cities like The Hague, home to international courts, government institutions, and political decision-making—we operate in an environment where information integrity matters profoundly. If our students cannot detect misinformation in something as locally relevant as municipal elections, how will they navigate global political discourse?

Conclusion: A Local Incident, A Universal Lesson

Discovering that an AI-generated voting aid containing errors was used in my own city’s elections was unsettling. It brought abstract discussions about AI ethics into immediate proximity. The issue was no longer theoretical. It was local, civic, and real.

The Keus.nl episode illustrates that misinformation in the age of AI is not only about malicious actors. It can emerge from experimentation, optimism, and technical enthusiasm. It can spread quickly. It can influence real decisions.

For educators, this is a call to action. Teaching students to triangulate—to verify AI-generated claims through independent sources before accepting them—is not a burden. It is a gift. It equips them with a habit of mind that will serve them in every domain: academic, professional, and civic.

As the creator of Keus.nl concluded: human oversight remains necessary. But so does human judgment. And cultivating that judgment is ultimately what education is for.

Sources consulted for this article:

https://www.bd.nl/waalwijk/partij-in-waalwijk-slaat-alarm-over-ai-kieswijzer-vol-fouten-deze-misleidende-informatie-is-kwalijk~a7100cf9/?referrer=https%3A%2F%2Fwww.google.com%2F

https://keus.nl/pers

About METACOG

METACOG is an EU-funded AI literacy programme designed to combat disinformation and fake news by promoting civic engagement and shared European values.

The initiative aims to strengthen the ability of citizens, particularly higher education students and teachers, to recognize and respond to disinformation through an innovative curriculum, AI-based tools, and best practices.

By acknowledging the dual role of artificial intelligence in both generating and countering disinformation, METACOG seeks to empower individuals with the critical thinking and digital literacy skills essential for navigating today’s information landscape.

Targeting higher education institutions, government and non-governmental organizations, media professionals, and the general public, METACOG is led by Haaga-Helia University of Applied Sciences (Finland) in collaboration with the Technical University of Košice (Slovakia), the University of Montenegro (Montenegro), and The Hague University of Applied Sciences (The Netherlands). The project is funded under the Erasmus+ Programme (KA220-HED) and will run from 1 September 2024 to 31 August 2027.